- Blog

- Cnet konami winning eleven 9 free download

- Aralia ming

- Project 64 controller plugin for gge909 pc recoil pad

- Nexus 6 oxygen os 2017

- Zero shinku no chou english patch

- Hot shot casino promo code generator activation code

- Parallels free

- Bubblegum crisis torrent

- Lakshmi tamil movie 2018

- Foto interni panda 4x4 cross

- Lagu natal christmas song

- Slic loader legacy usb issue

- Radiant definition

- Gloomhaven organizer siem

- Will take the dpf off of a 2010 6-7 cummins add mpg

- Nick carter novel dewasa

- Scatter chart excel for mac

- Can you run grbl on raspberry pi

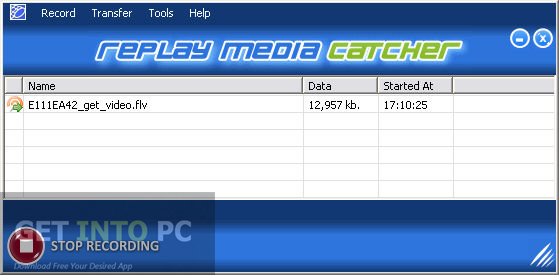

- Replay media catcher opening magnet links

Loci uses voice, gaze and gestures to let you build your 3D graphs (mind maps). Loci supports spatial mapping, so you can place nodes from your mind maps in relevant locations in your memory palace.


Loci is a mixed reality mind mapping application, based on the method of loci, that places your mind maps around you. It combines the method of loci memory technique and 3D mixed reality interaction with mind maps (graphs) to support you on your personal analysis tasks. These tasks can include looking for an apartment, finding out more about a disease, or deciding on a next job. Loci features initial import capability for MindManager, FreeMind, and GraphML files.

Why - Loci uses three core concepts to help you organize, understand and recall. Mind maps let you break a problem down into component parts to organize and analyze it. Mixed reality interaction with mind maps lets you visualize the parts in 3D to understand how they are related. The method of loci's persistent placement of mind map nodes lets you improve your recall of the mind map, even when you are not using the Loci software. In this way you can organize your problem, understand it, and remember it later when you need to use that information for decisions.

How - Loci is built for Windows Mixed Reality.

The glTF Viewer application makes use of the Mixed Reality Toolkit for Unity and the UnityGLTF projects in order to read glTF files. It relies solely on local networking technology: it does not involve the setup of an external server, nor does it make use of cloud services. The application makes no attempt to alter the models you give it to suit the mobile processing capability of the HoloLens. This means that large, complex models are likely to suffer poor performance unless pre-processed to reduce complexity. If you have models in formats other than glTF, you can use utilities such as Paint 3D to convert them and save them as glTF. The user is always able to scale, rotate, translate and remove the models that they opened, and the results of those operations are synchronised to other viewers.
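Because the viewer does not simplify models for you, it can help to gauge a model's complexity before copying it to the device. The following is a minimal sketch, not part of glTF Viewer, that estimates the triangle count of a parsed glTF 2.0 JSON document. The function names and the default budget of 100,000 triangles are assumptions made for illustration, not a documented HoloLens limit.

```python
TRIANGLES = 4  # glTF primitive mode for triangle lists (the default when "mode" is absent)


def estimate_triangles(gltf: dict) -> int:
    """Estimate the triangle count of a parsed glTF 2.0 JSON document.

    Counts each mesh once, so meshes instanced by several nodes are not multiplied.
    """
    accessors = gltf.get("accessors", [])
    total = 0
    for mesh in gltf.get("meshes", []):
        for prim in mesh.get("primitives", []):
            if prim.get("mode", TRIANGLES) != TRIANGLES:
                continue  # this sketch ignores points, lines, strips and fans
            if "indices" in prim:
                # Indexed geometry: three indices per triangle.
                count = accessors[prim["indices"]]["count"]
            else:
                # Non-indexed geometry: three vertices per triangle.
                count = accessors[prim["attributes"]["POSITION"]]["count"]
            total += count // 3
    return total


def needs_preprocessing(gltf: dict, budget: int = 100_000) -> bool:
    """Hypothetical check: flag models above an assumed triangle budget."""
    return estimate_triangles(gltf) > budget
```

A multiple-file `.gltf` model is plain JSON, so `json.load` on the file yields the dictionary these functions expect.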
Run "glTF Viewer" and use the "open" voice command to choose the model that you want to display. You should be able to load any models packaged as either single files (.GLB) or multiple files (.GLTF). You can open as many models as you like; the device will display them around 2m in front of you, following your gaze, and, once opened, you can use the regular one- and two-handed gestures to scale, rotate and move the model around in space. With your focus on a specific model, you can use the "reset" voice command to put it back to its original location and the "remove" voice command to delete it from your scene.

On a compatible network setup, when one user opens a model, any other glTF Viewer users will be notified and offered a chance to bring this newly opened model into their scene. Such a "shared" model will be displayed in the same physical location, and updates to the model on the originating device will be replicated to all other viewers sharing that model. This "sharing" feature allows a group of users on a network to each have separate scenes made up of models that they have opened locally on a device, intermingled with models which were originally opened by other users on other devices.
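The placement of a newly opened model, around 2m in front of you along your gaze, comes down to simple vector arithmetic. The sketch below illustrates that idea only; it is not the viewer's actual code, and the function name, the tuple-based vectors and the example head height are assumptions.

```python
import math


def place_in_front(head_pos, gaze_dir, distance=2.0):
    """Return the point `distance` metres from `head_pos` along `gaze_dir`.

    Both arguments are (x, y, z) tuples; `gaze_dir` need not be normalised.
    """
    x, y, z = gaze_dir
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0:
        raise ValueError("gaze direction must be non-zero")
    # Scale the unit gaze vector by the distance and offset it from the head.
    return tuple(p + distance * c / length for p, c in zip(head_pos, (x, y, z)))


# Head at an assumed height of 1.6m, looking straight ahead along +z.
position = place_in_front((0.0, 1.6, 0.0), (0.0, 0.0, 1.0))
```

The "reset" voice command described above would then only need to restore a model to the position recorded when it was first placed.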


glTF Viewer is an application to view glTF (TM) files on the HoloLens. To get your glTF files onto your HoloLens, attach the device to a PC and use the built-in Windows support to copy your model files (.GLB/.GLTF) onto the storage on the device, taking care to place them somewhere within the '3D Objects' folder.
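Before copying files across, you may want to confirm which of the two packagings a model uses. In glTF 2.0, a single-file `.GLB` starts with a 12-byte binary header whose first four bytes are the ASCII magic `glTF`, while a `.GLTF` file is JSON with a required top-level `asset` object. The following is a minimal sketch of such a check; `classify_model` is a hypothetical helper, not part of the viewer.

```python
import json
import struct

GLB_MAGIC = 0x46546C67  # ASCII "glTF" read as a little-endian uint32
GLB_HEADER_SIZE = 12    # magic, version and total length, each a uint32


def classify_model(data: bytes) -> str:
    """Return "glb", "gltf" or "unknown" for the raw bytes of a model file."""
    if len(data) >= GLB_HEADER_SIZE:
        (magic,) = struct.unpack_from("<I", data, 0)
        if magic == GLB_MAGIC:
            return "glb"
    try:
        doc = json.loads(data.decode("utf-8"))
    except ValueError:  # covers both invalid UTF-8 and invalid JSON
        return "unknown"
    # Every glTF 2.0 JSON document must carry an "asset" object.
    if isinstance(doc, dict) and "asset" in doc:
        return "gltf"
    return "unknown"
```

To use it, read a file in binary mode and pass the bytes, for example `classify_model(open(path, "rb").read())`, where `path` points at your own model file.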